Our team attended the AI & Digital Construction track at New York Build last week. The room was packed. The conversations were urgent. And the gap between firms moving fast on AI and firms still figuring out where to start is getting wider. Here’s what we learned.

The Javits Center was buzzing. Our team spent the day at NY Build 2026’s AI & Digital Construction track, attending panels on smart buildings, enterprise AI adoption, and data center coordination, plus a workshop from STV’s new Digital Advisory practice.

If there was one theme that cut across every session, it was this: the industry is taking AI seriously now. Not as a future consideration. Not as an experiment. As infrastructure.

Turner Construction has 8,000 AI licenses deployed enterprise-wide. Architecture firms are building custom tools in days instead of months. VDC teams are connecting AI directly to their coordination workflows.

But there’s a growing gap. The firms treating digital transformation as a strategic priority are pulling away from the firms that are still in the “we should probably look into that” phase.

Here’s what we learned – and what it means for how construction teams work.

Turner’s Enterprise AI Rollout Sets the Benchmark

Ben Ferrer, Turner Construction’s AI Lead, walked through their adoption journey. They started with 150 ChatGPT Enterprise licenses 2.5 years ago. Today they have over 8,000 licenses across the organization. They’re now rolling out Claude and Gemini to give teams access to multiple foundation models depending on the task.

His key insight: “You can’t just do it ground up, it won’t get very far. You need leadership, top-down support.”

This matches what we’re seeing with our clients. AI adoption that starts at the user level – a few people experimenting on their own – hits a ceiling quickly. IT blocks access. Security raises concerns. Budget conversations stall. The early adopters get frustrated and move on.

The firms making real progress are the ones where leadership decided this matters. Where the CTO or the VP of Operations said: “This is infrastructure now. We’re investing in getting it right.”

Ben described the friction Turner faced early on. Their IT and security teams had valid concerns about data governance, model accuracy, and vendor lock-in. He didn’t dismiss those concerns. He worked through them. Leadership gave him the runway to figure it out.

Once that alignment happened, adoption accelerated. Teams built their own use cases. Support requests turned into feature requests. The culture shifted from “should we use this?” to “how do we use this better?”

That’s the pattern. Top-down commitment. Structured rollout. User training. Validation frameworks. Then scale.

“Enabled For” – Design Buildings Ready for Intelligence

Ayse Polat, Turner’s VDC Director, introduced a concept during the smart buildings panel that we haven’t heard phrased quite this way before: buildings need to be “enabled for” the future, not retrofitted after the fact.

She was talking about digital twins and smart building systems. The idea: if you want a building to support IoT sensors, predictive maintenance, or autonomous operations, you have to plan for that capability during design and construction. Trying to add it later costs exponentially more – and often doesn’t work as well.

Greg Halpin from ST Engineering reinforced the point: “When you’re in the design phase, you can put this in for pennies on the dollar. You’re basically future-readying or future-proofing that building.”

This principle extends beyond smart buildings. It applies to every construction project.

You can’t coordinate what you don’t understand. You can’t plan for future renovations if your as-built documentation is outdated. You can’t retrofit intelligence into a building that wasn’t designed to receive it.

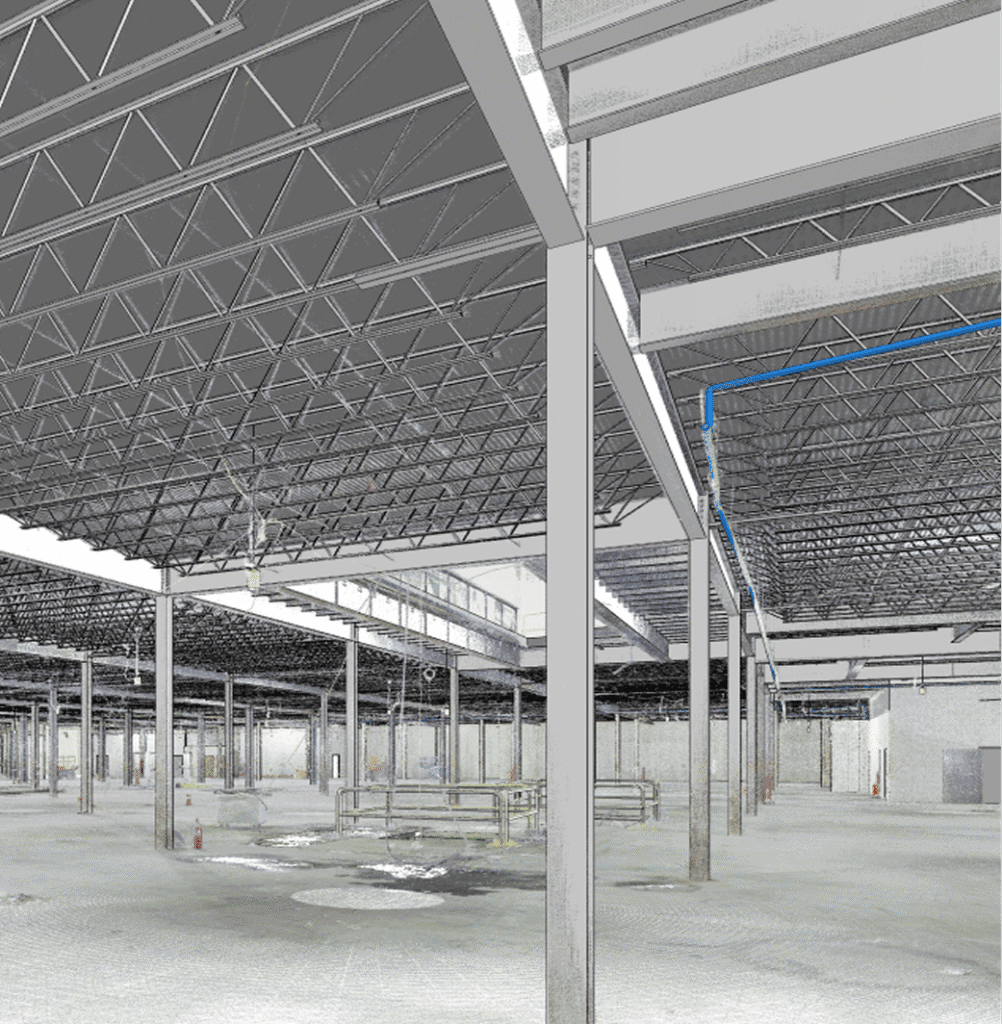

Reality capture early in the process – before design is locked, before construction starts – creates the foundation for everything that comes next. When you start with verified conditions, you’re building on data instead of assumptions.

That’s the “enabled for” approach applied to coordination: design your process around accurate information from day one, or spend exponentially more fixing it later.

Digital Twins Are Misunderstood

Ayse Polat made a distinction during her panel that matters for how the industry talks about this technology:

“Sometimes we get companies say, oh, we create digital twins. How do you do it? Oh, we laser scan the building. Okay, that’s awesome, but it’s not a digital twin.”

She outlined a maturity spectrum:

- Descriptive twin: Static information about the building (geometry, materials, systems)

- Informative twin: Real-time data feeds from sensors and building systems

- Predictive twin: Analytics that forecast performance and maintenance needs

- Autonomous twin: The building makes operational decisions for itself

Her assessment: we’re not autonomous yet, but we’ll be there in 10 years.

This framing is important. Scanning captures the physical reality of a building. It creates the geometric foundation. But what happens next – how that data gets used for coordination, how it connects to building systems, how it enables predictive maintenance – is what determines whether you’re building toward a true digital twin or just creating a 3D snapshot.

The point cloud is the foundation. The digital twin is what you construct on top of it.

How Are You Connecting AI to Your Design Tools?

One area we didn’t hear much about at NY Build: how firms are connecting AI directly to their design environments.

Most of the conversation focused on plugins built for Revit, custom tools developed in-house, or workflow automation. All valuable work. But the next step – letting AI query your models, read your coordination data, navigate your clash reports without manual exports – that conversation is still emerging.

We’ve been exploring that direction for the past year, building connections so the AI can interact with Revit, Autodesk Construction Cloud (ACC), and Navisworks directly. Not just analyzing data that’s been exported, but working inside the environment where coordination happens.

We’re curious what approaches others are taking in this space.

Vincent Poon from Structure Tone described a machine learning takeoff plugin for Revit that his team built a decade ago, before ChatGPT existed. It could analyze partition takeoffs using natural language queries. By his account, it was 2% more accurate than human estimators and finished in minutes instead of days.

Eric Hull from Mancini Duffy described his team building custom AI workflows in days rather than months. “The biggest workflow win is the ability for people in the process to build the tools that help their workflows. You can build that tool in a day or less and roll it out to a group.”

The underlying trend: foundation models (Claude, ChatGPT, Gemini) are making it possible for small teams to build capabilities that used to require enterprise software vendors and multi-year roadmaps.

Ben Ferrer said it plainly: “SaaS is dying.”

His point: when teams can build their own tools faster than they can wait for vendor updates, the economics of enterprise software change. The firms that adapt to this shift will move faster. The firms waiting for vendors to ship features will find themselves trailing.

Data Has to Come First

At an AIA-accredited workshop, Nidhi Sekhar from STV’s Digital Advisory practice described a pattern we see on projects all the time:

“A lot of times what tends to happen is that a process happens and then the data that’s collected happens as an afterthought rather than being part of the process.”

That disconnect is why buildings don’t match their drawings. Why coordination issues surface during construction instead of during planning. Why project data isn’t structured for handoff to facility management.

STV’s consulting framework (Investigate → Integrate → Innovate) is designed to move organizations from reactive problem-solving to proactive, insight-driven decision-making. The starting point: standardize data structures, define ownership, validate inputs, and build reporting on top of it.

We’ve been doing exactly this on coordination projects. Our Taxonomy Standards and BIM Execution Plan process embed data standards from the beginning. The scans capture reality. The coordination workflow makes it actionable.

Data-first, not data-last.

The same theme showed up in the data centers panel. Dan Silva from Simpson Gumpertz & Heger described the coordination challenge in high-density MEP environments: hundreds of penetrations through foundation walls, below-grade conduits, and rooftop equipment spanning multiple trades.

“We really have to make sure we’re designing and installing high-performing building enclosure systems. One of the challenges with data centers is all of the penetrations that come into the building. Those are always the problem areas.”

A single roof leak can shut down a data center. In an environment where speed to market is everything and downtime costs millions, coordination before construction isn’t optional.

It’s the whole game.

The Honest Conversation About Jobs

Multiple panels touched on the “AI won’t take your job” narrative. The line we kept hearing: AI won’t take your job, but someone who knows AI will.

We’re not sure that’s the full picture.

If one person can do the work of ten, what happens to the other nine? That’s a real question the industry needs to address – not just for engineering and architecture teams, but for field labor too.

Technology creates efficiency. Efficiency changes headcount. Acknowledging that reality isn’t fear-mongering. It’s planning.

The most honest take came from Ben Ferrer at Turner: AI is a tool that makes your team more capable. But being more capable with fewer people is still being fewer people. The industry needs to have a real conversation about what that transition looks like and how we support people through it.

Not everyone will adapt at the same speed. Not every role will translate cleanly into an AI-augmented version of itself. Pretending otherwise doesn’t help anyone prepare.

What This Means for How Teams Work

New York Build 2026 made something clear: the AEC industry is moving fast on AI. Turner has 8,000 licenses. Mancini Duffy is building custom tools in days. Structure Tone has been experimenting with machine learning for a decade.

But the gap between firms that are treating this as strategic infrastructure and firms that are still in the “we should probably look into that” phase is widening.

We’ve been working with AI tools daily for three years. The learning curve is real. The productivity gains are real. The questions about what this means for teams and workflows are real too.

The firms that are moving fast aren’t necessarily the ones with the biggest budgets or the most people. They’re the ones where leadership decided this matters and invested in figuring it out.

If your firm is trying to figure out where to start, we’d be happy to share what we’ve learned.